CJF names shortlist for inaugural CJF-Hinton Award for Excellence in AI Safety Reporting

Toronto, April 8, 2026 – The Canadian Journalism Foundation (CJF) is proud to announce its shortlist for the inaugural CJF-Hinton Award for Excellence in AI Safety Reporting.

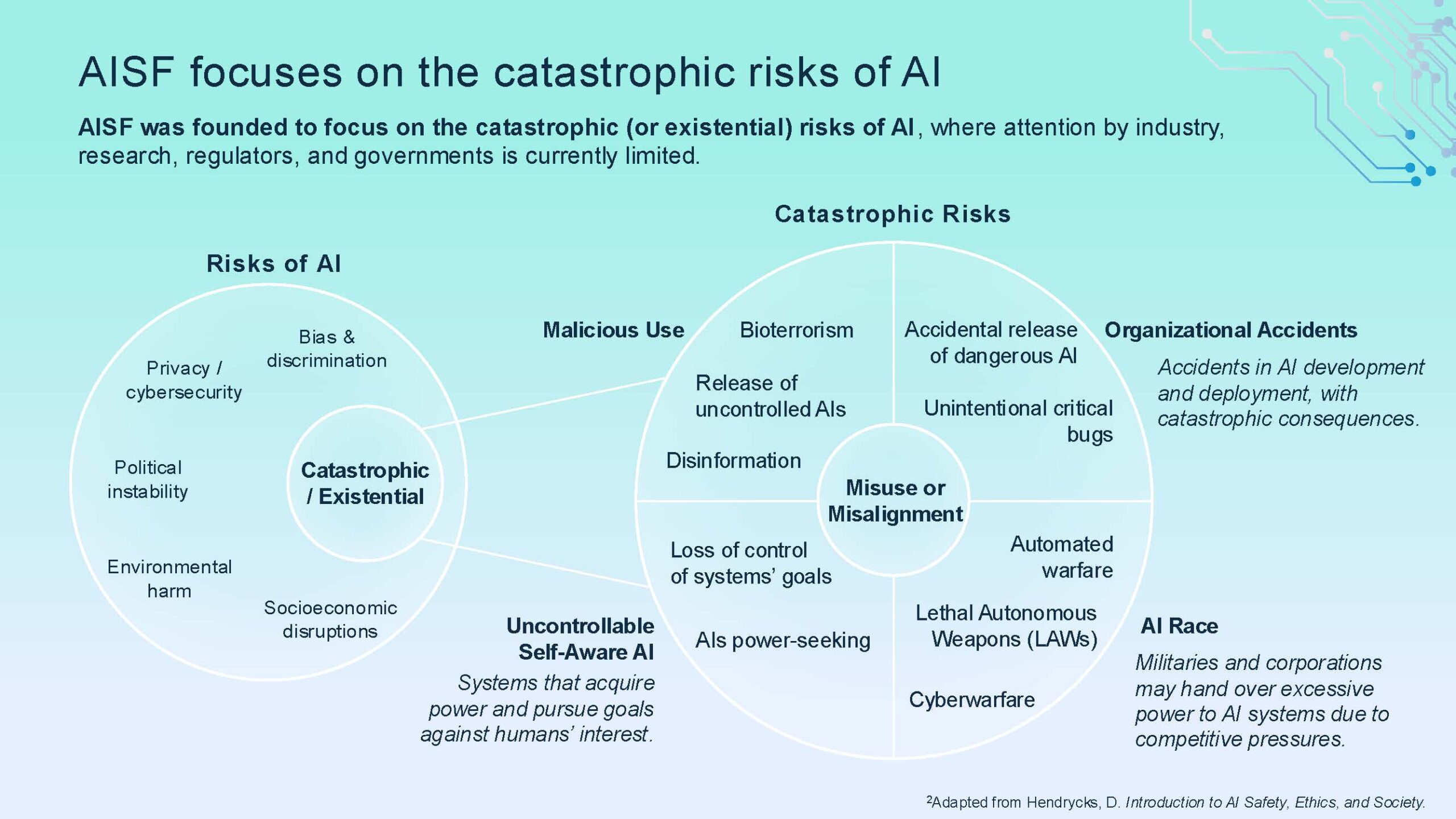

Launched in October 2025 in partnership with the AI Safety Foundation, the award is named for Nobel laureate Dr. Geoffrey Hinton, a pioneer of modern deep learning and a prominent voice alerting governments and the public to artificial intelligence’s (AI’s) potential threat to humankind. It recognizes exceptional journalism that critically examines the safety implications of AI, a transformative technology with far-reaching societal impacts. It also honours reporting that not only identifies challenges but explores innovative solutions and pathways to mitigate risks, advancing public conversation on how AI can be developed and applied responsibly. The goal of this award is to promote thoughtful, evidence-based reporting that raises awareness of catastrophic risks associated with AI, fosters public understanding, and encourages dialogue on creating a safer future for humanity. The winner receives $10,000.

“AI’s risks are no longer theoretical. They are immediate, complex and deeply consequential. This year’s shortlisted entries confront some of the most urgent safety challenges of our time, including non-consensual deepfakes and coordinated disinformation, with rigour, originality and real-world impact. This is exactly the kind of reporting this award was created to recognize,” says Natalie Turvey, CJF president and executive director.

The three finalists for this year’s award and the stories or series shortlisted are:

Nam Kiwanuka and the team at TVO’s The Thread with Nam Kiwanuka (Chantale Dahmer, Ali Zaidi and Diego A. Garcia) for How AI is turning your image into an explicit deepfake. The episode examines non-consensual deepfakes targeting girls, featuring Toronto students’ experiences, expert insights, legal gaps, policing challenges and advocacy for protections and digital rights reform. “The terrorization of women at scale with near-zero accountability was palpable. The situation it presented felt like a civilizational step backwards from the progress we have made towards gender equality,” remarks jury member Matthew Lee. “The piece made clear that the proliferation of AI tools capable of generating nonconsensual intimate imagery cannot be adequately addressed through legal prohibition or platform moderation alone.”

Craig Silverman, co-founder of Indicator, with co-founder Alexios Mantzarlis, and co-authors Santiago Lakatos and Benjamin Shultz for three articles from Indicator’s body of reporting on AI nudifiers. These tools of abuse allow anyone to turn a single photo of a person into a realistic pornographic image or video without the subject’s consent, and are used by millions of people around the world to abuse women and girls. Jury member Cillian Crosson calls the series “impressive accountability journalism,” noting “Their reporting has already produced concrete results — Google revoked SSO for 23 sites and Meta removed thousands of ads promoting nudifying sites.”

Rory White, of Canada’s National Observer, with additional contributions from Managing Editor David McKie, for a three-part investigative series uncovering a group that used artificial intelligence to create persuasive climate disinformation targeting local politicians across Canada. The series explores cross-governmental knowledge sharing as a solution and introduces a purpose-built tool that makes coordinated messaging detectable across more than 500 local governments. The tool enabled a real-time intervention in Cochrane, Alberta, where councillors voted to remain in the program. Jury chair Nikita Roy calls the series “A strong solutions-oriented investigation that not only exposes but demonstrates a real-world intervention.”

This award is supported by the AI Safety Foundation and by a generous gift from philanthropists Richard Wernham and Julia West.